Our model, CAGE, is designed to both compose and animate scenes from a sparse set of visual features. The model is trained in an unsupervised way from a dataset of unannotated videos.

The field of video generation has expanded significantly in recent years, with controllable and compositional video generation garnering considerable interest. Traditionally, such control has relied on annotations such as text, object bounding boxes, and motion cues, which require substantial human effort and thus limit scalability. We address the challenge of controllable and compositional video generation without any annotations by introducing a novel unsupervised approach. Once trained from scratch on a dataset of unannotated videos, our model can compose scenes by assembling predefined object parts and animate them in a plausible and controlled manner.

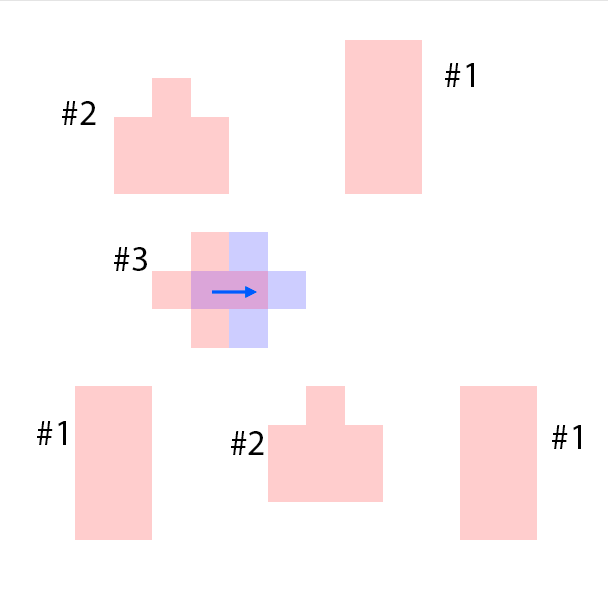

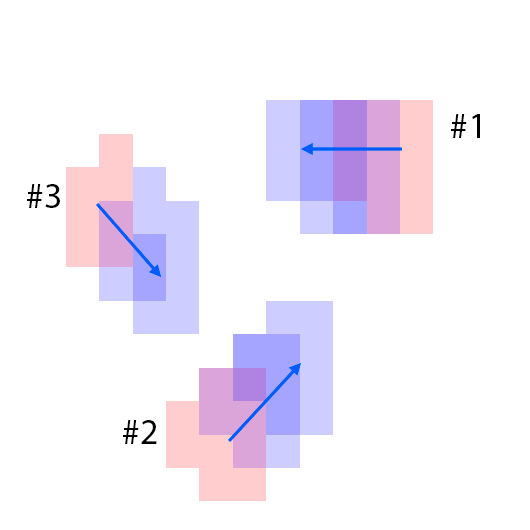

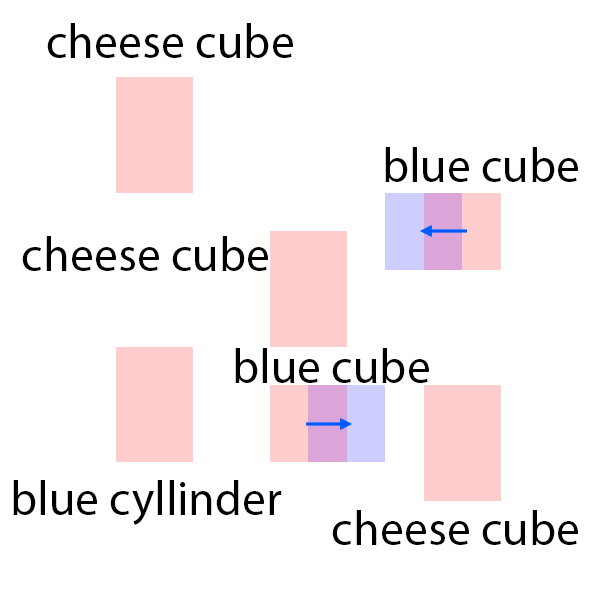

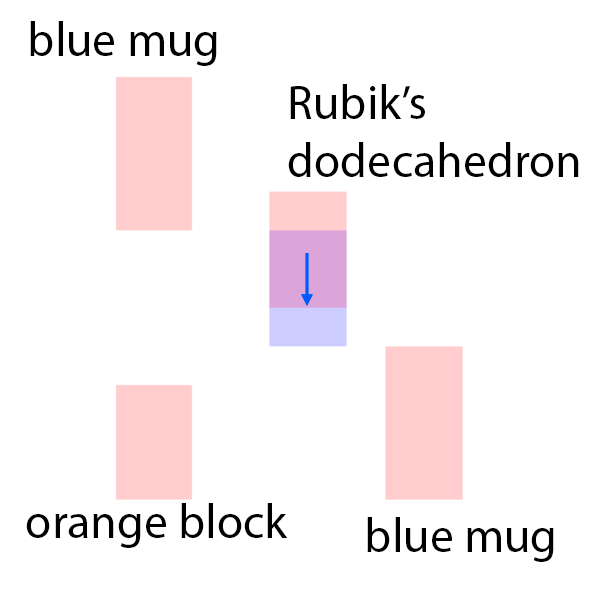

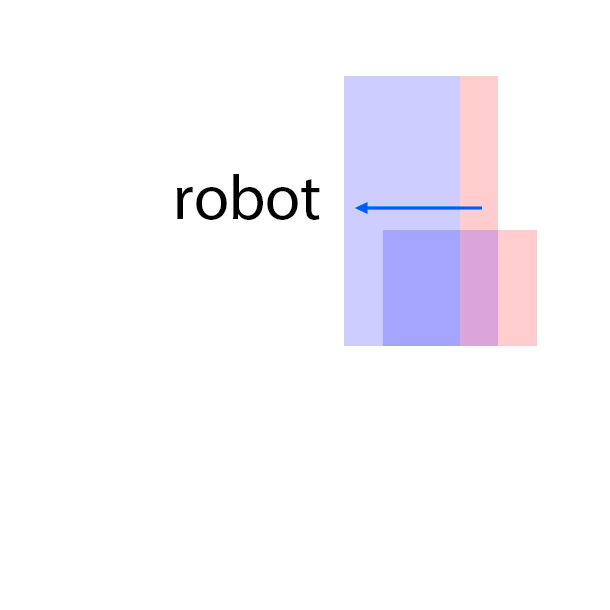

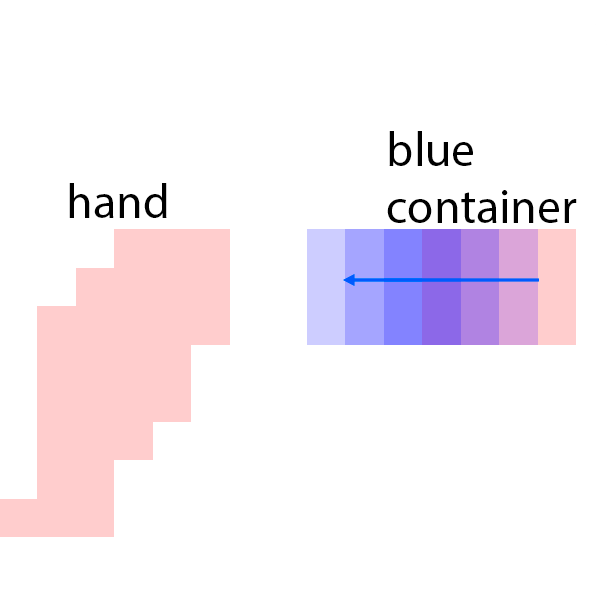

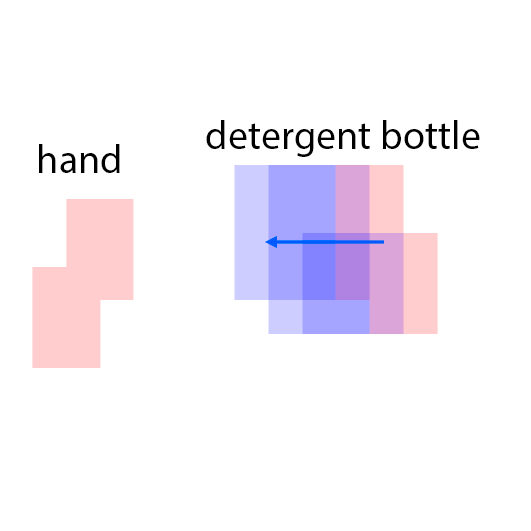

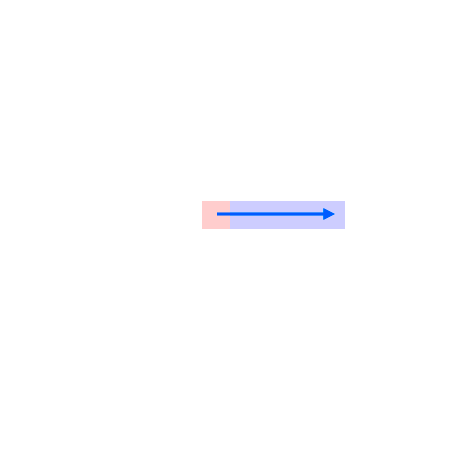

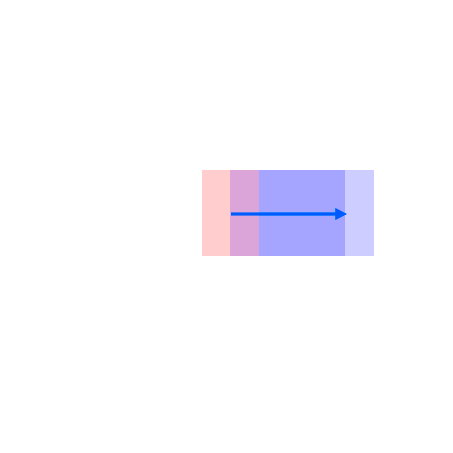

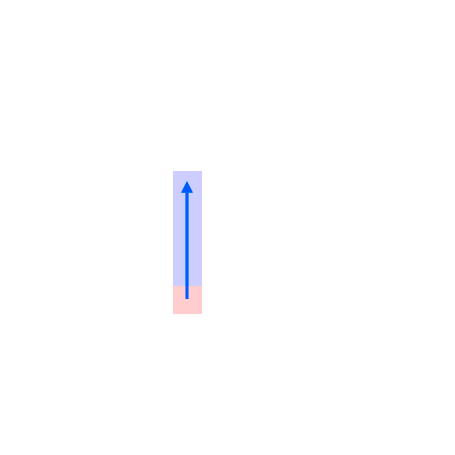

The core innovation of our method lies in its training process, where video generation is conditioned on a randomly selected subset of pre-trained self-supervised local features. This conditioning compels the model to learn how to inpaint the missing information in the video both spatially and temporally, and thereby to capture the inherent compositionality and dynamics of the scene. Because the conditioning features are abstract and invariant to minor visual perturbations, object motion can be controlled by simply moving the features to the desired future locations. We call our model CAGE, which stands for visual Composition and Animation for video GEneration.
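The two ideas in this paragraph, conditioning on a random sparse subset of local features and steering motion by relocating a feature, can be illustrated with a toy sketch. This is not the CAGE implementation: the 4x4 grid, the two-element placeholder vectors, and the helper names `sample_sparse_controls` and `move_control` are all assumptions made for illustration.

```python
import random

def sample_sparse_controls(features, keep, rng):
    """Keep a random subset of `keep` patch features; the rest are dropped."""
    positions = sorted(features)
    chosen = rng.sample(positions, keep)
    return {pos: features[pos] for pos in chosen}

def move_control(controls, src, dst):
    """Return a copy of `controls` with the feature at `src` relocated to `dst`."""
    if dst in controls and dst != src:
        raise ValueError("destination already holds a control feature")
    moved = dict(controls)
    moved[dst] = moved.pop(src)
    return moved

rng = random.Random(0)
# Hypothetical 4x4 grid of patch features (placeholder vectors, not real DINOv2 output).
features = {(r, c): [float(r), float(c)] for r in range(4) for c in range(4)}

# Training-style conditioning: only a sparse random subset is given to the model.
controls = sample_sparse_controls(features, keep=3, rng=rng)

# Inference-style control: place one kept feature at a new location in a future frame.
src = next(iter(controls))
dst = next(pos for pos in sorted(features) if pos not in controls)
future_controls = move_control(controls, src, dst)
```

The feature vector itself is unchanged by the move; only its grid location differs, which is what lets the location act as the motion control.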

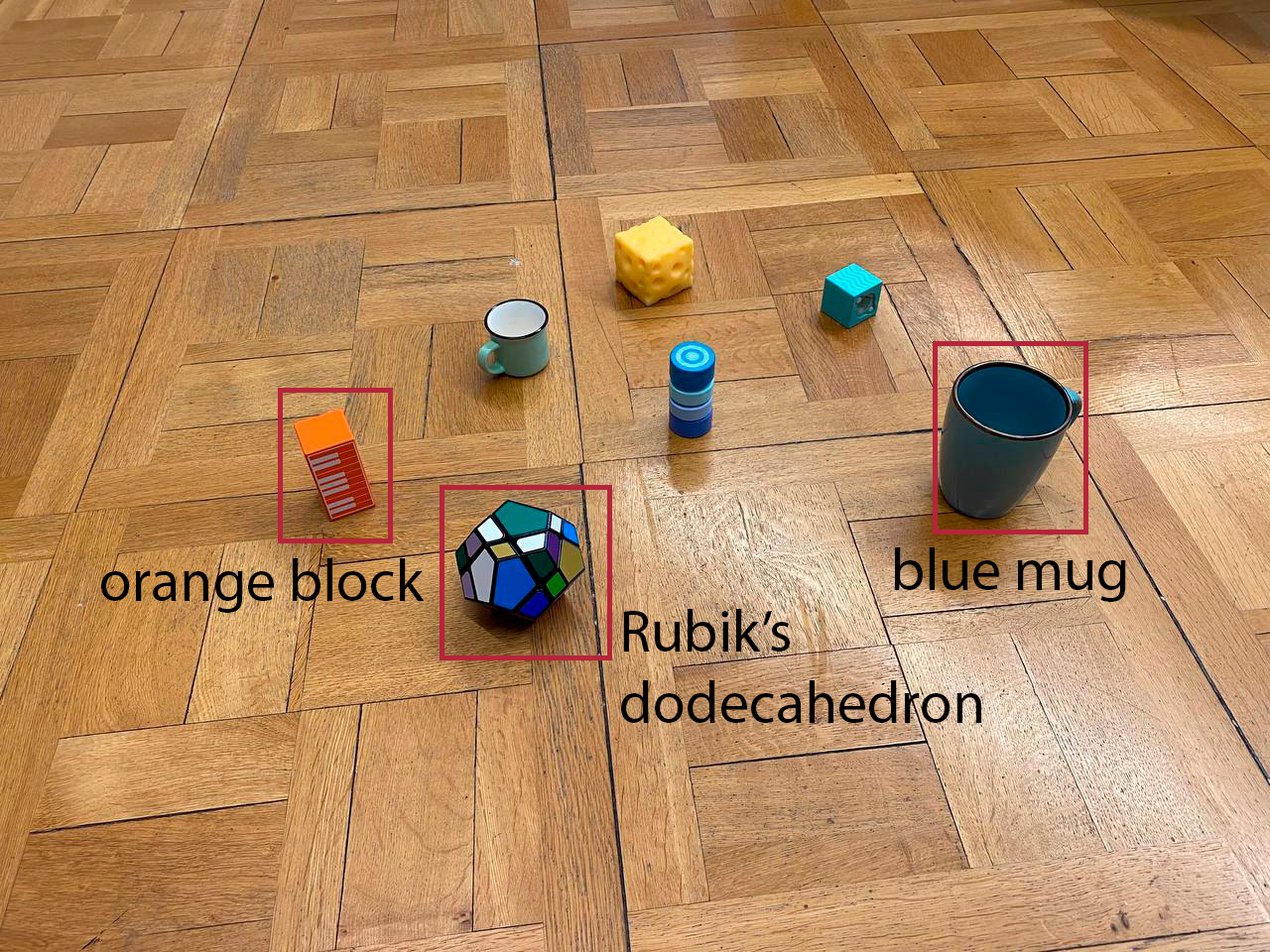

We conduct extensive experiments to validate the effectiveness of CAGE across various scenarios, demonstrating its ability to follow the controls accurately and to generate high-quality videos that exhibit coherent scene composition and realistic animation.

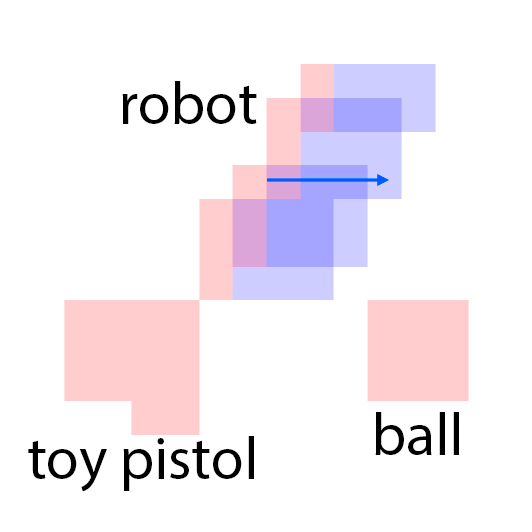

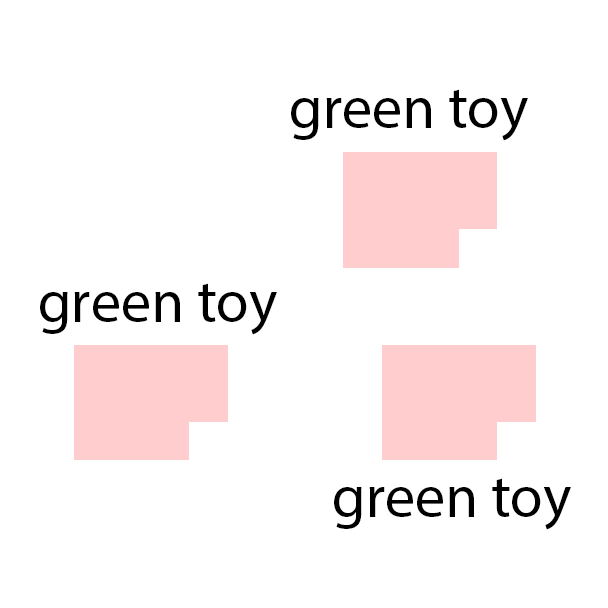

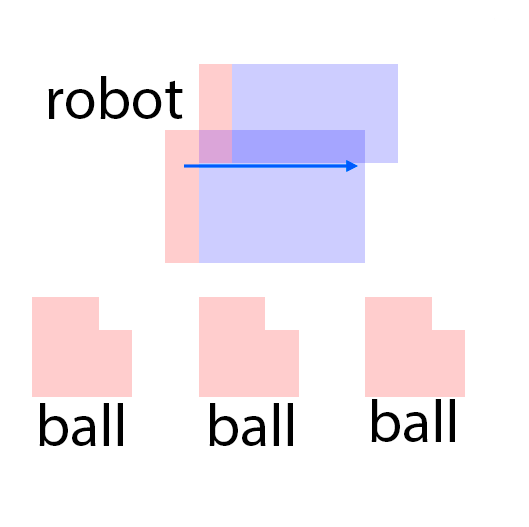

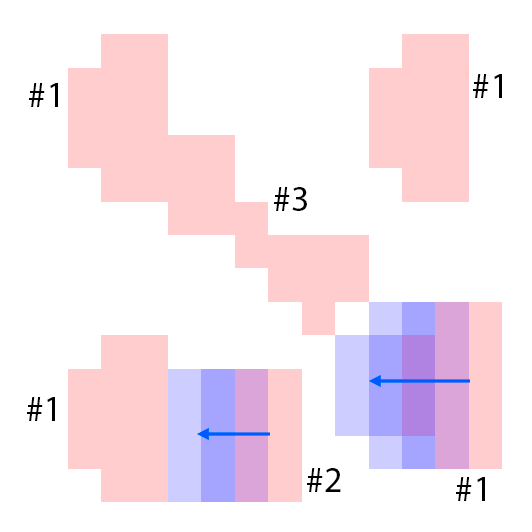

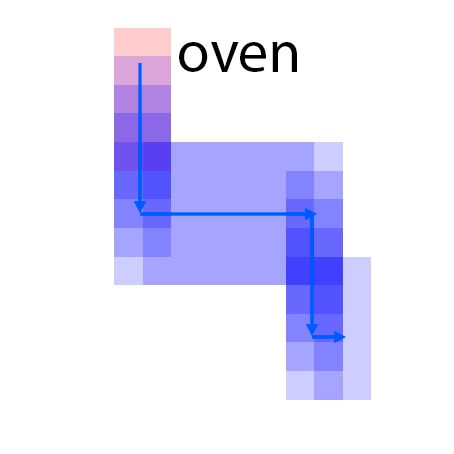

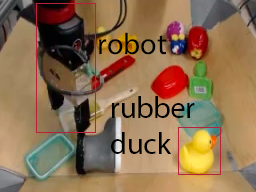

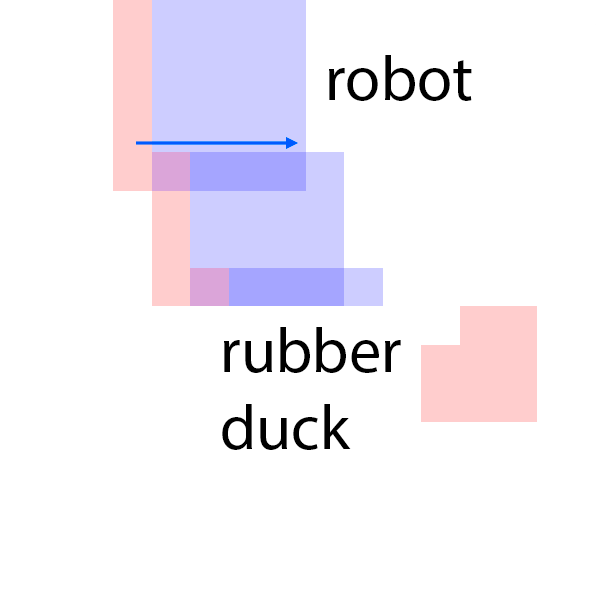

The overall pipeline of CAGE. The model takes all the colored frames and processes them equally and in parallel; only the pipeline for a single frame (in red) is illustrated. CAGE is trained to predict the denoising direction for the future frames in the Conditional Flow Matching framework, conditioned on the past frames (context and reference) and sparse random sets of DINOv2 features. The frames communicate with each other via the Temporal Blocks while being processed separately by the Spatial Blocks. The controls are incorporated through Cross-Attention.
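As a rough illustration of the training target mentioned in the caption, the sketch below builds one training pair for a common form of conditional flow matching with a straight-line path between noise and data. The exact path and noise schedule used by CAGE are not stated here, so the linear path, the function names, and the flat-list frame representation are assumptions.

```python
import random

def cfm_training_pair(x1, rng):
    """Build (x_t, t, target) for a linear flow-matching path.

    x1 is a clean frame as a flat list of floats; x0 is Gaussian noise.
    The network would be trained to predict `target` from (x_t, t, conditioning).
    """
    x0 = [rng.gauss(0.0, 1.0) for _ in x1]
    t = rng.random()                                        # time in [0, 1)
    x_t = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]   # point on the path
    target = [b - a for a, b in zip(x0, x1)]                # direction from noise to data
    return x_t, t, target

def mse(pred, target):
    """Mean squared error between predicted and target directions."""
    return sum((p - q) ** 2 for p, q in zip(pred, target)) / len(target)

rng = random.Random(0)
clean_frame = [0.5, -1.0, 2.0, 0.0]      # toy stand-in for a future frame
x_t, t, target = cfm_training_pair(clean_frame, rng)
prediction = [0.0] * len(target)         # placeholder for a network output
loss = mse(prediction, target)
```

With this path, following the target direction from `x_t` for the remaining time `1 - t` lands exactly on the clean frame, which is why the target can be read as a denoising direction.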

In order to prevent overfitting to the position information that is present in DINOv2 features as well as to impose some scale invariance, the features are calculated on random crops and then pasted back to their original locations.
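A minimal sketch of the crop-and-paste idea follows, under simplifying assumptions. The `fake_extractor` stand-in is hypothetical and merely tags each patch with its position inside its input, to mimic how positional information can leak into features. Resizing the crop before feature extraction, which is what would contribute scale invariance, is omitted.

```python
import random

def fake_extractor(patches):
    """Stand-in encoder: returns (content, row, col) per patch, where row/col
    are positions within the *input*, mimicking positional leakage."""
    return [[(v, r, c) for c, v in enumerate(row)] for r, row in enumerate(patches)]

def crop_and_paste(patches, crop_h, crop_w, rng):
    """Extract features on a random crop and paste them at their original locations."""
    height, width = len(patches), len(patches[0])
    top = rng.randint(0, height - crop_h)     # randint is inclusive on both ends
    left = rng.randint(0, width - crop_w)
    crop = [row[left:left + crop_w] for row in patches[top:top + crop_h]]
    feats = fake_extractor(crop)
    canvas = [[None] * width for _ in range(height)]   # None marks "no feature"
    for r in range(crop_h):
        for c in range(crop_w):
            canvas[top + r][left + c] = feats[r][c]
    return canvas, (top, left)

rng = random.Random(0)
# Toy 6x6 image in patch units; each entry is a unique content id.
patches = [[10 * r + c for c in range(6)] for r in range(6)]
canvas, (top, left) = crop_and_paste(patches, crop_h=3, crop_w=3, rng=rng)
```

Because the crop offset is random, the positional component attached to a given patch varies between draws while its pasted location stays fixed, so a model cannot rely on the position encoded inside the feature.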

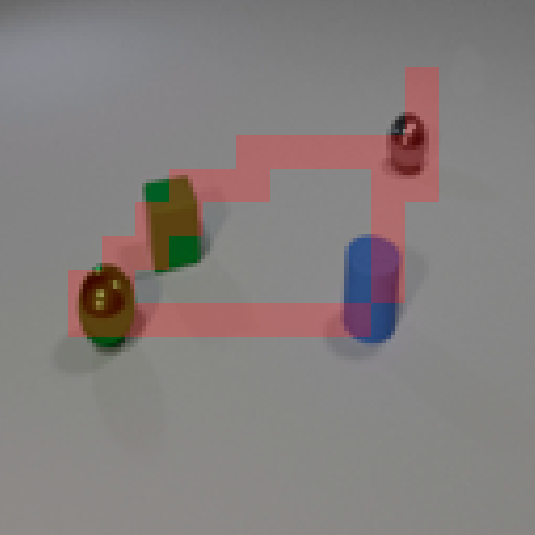

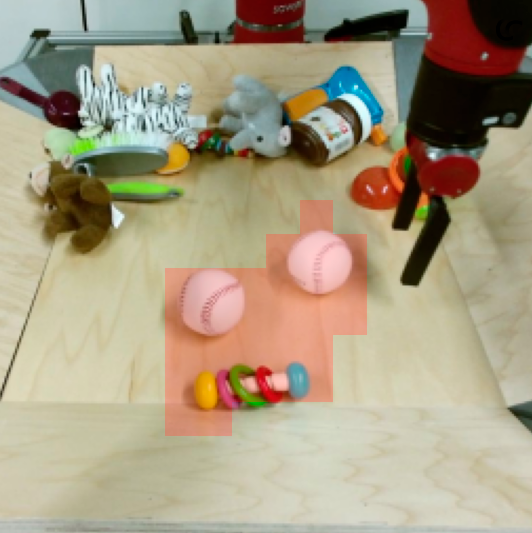

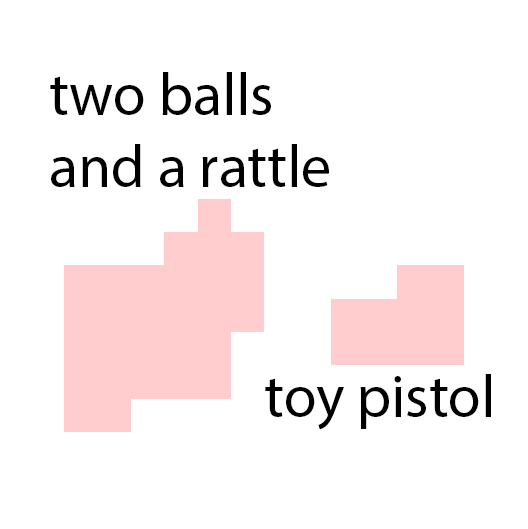

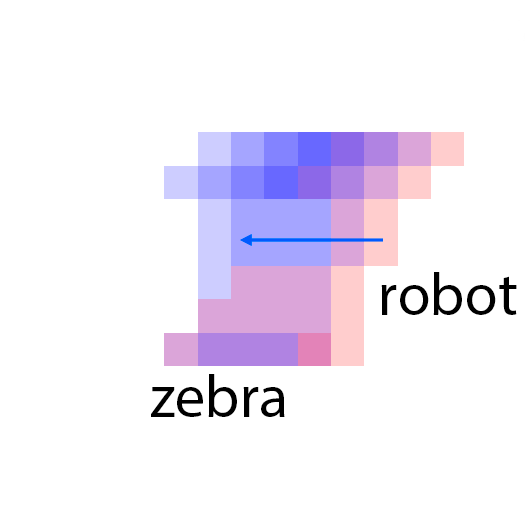

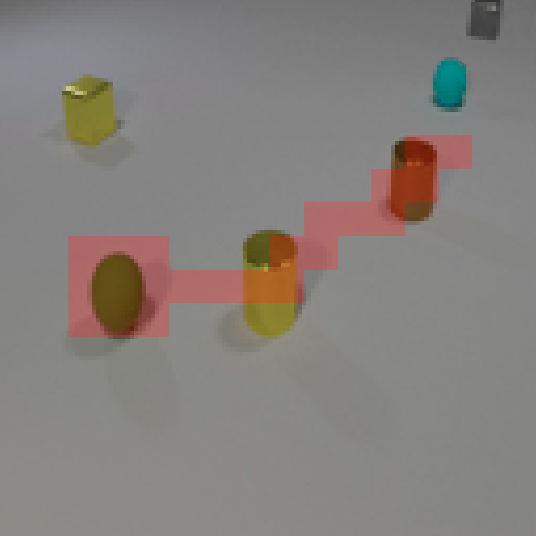

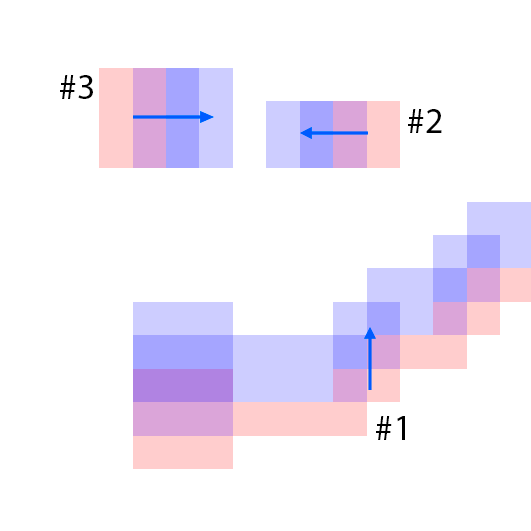

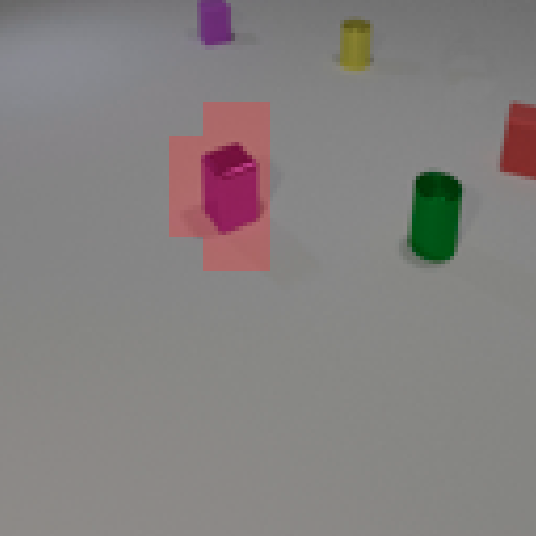

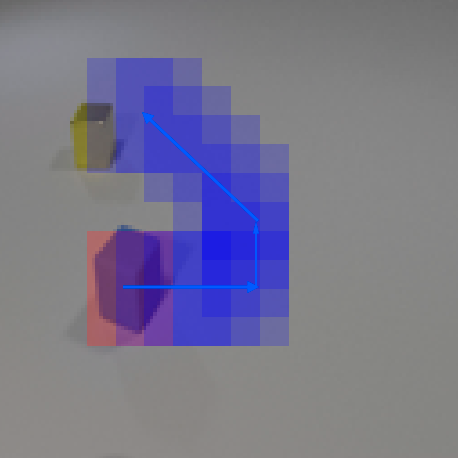

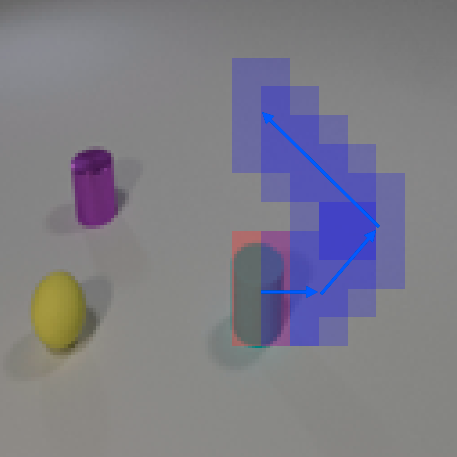

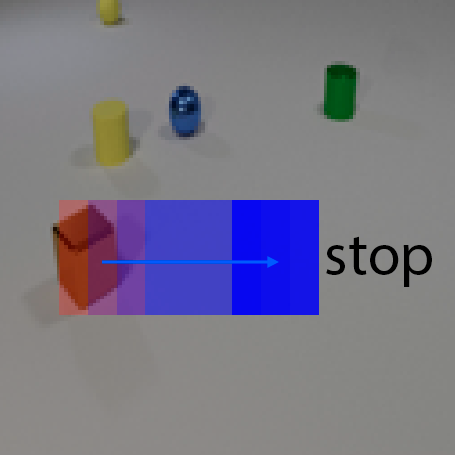

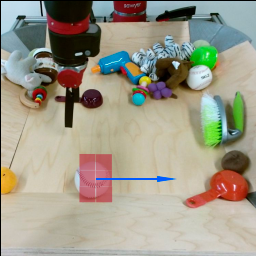

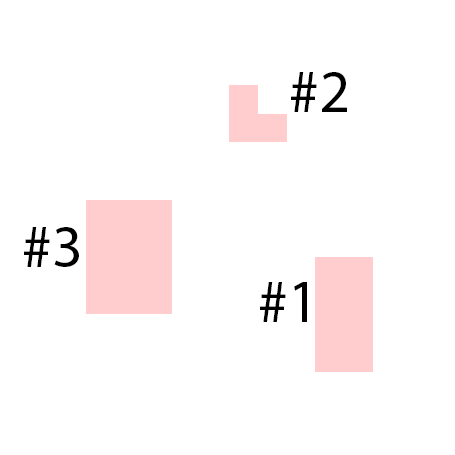

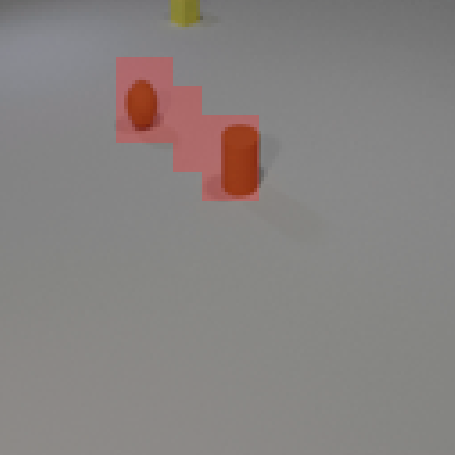

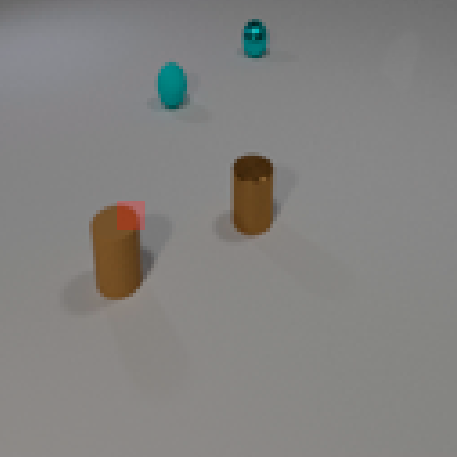

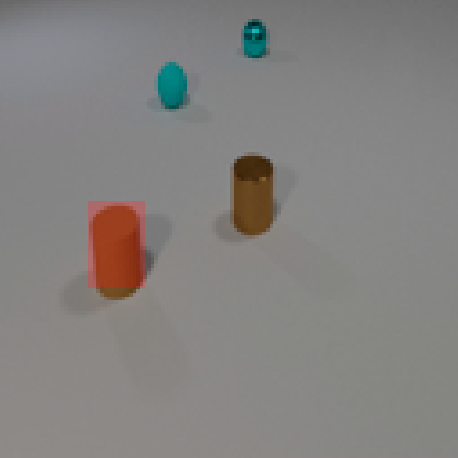

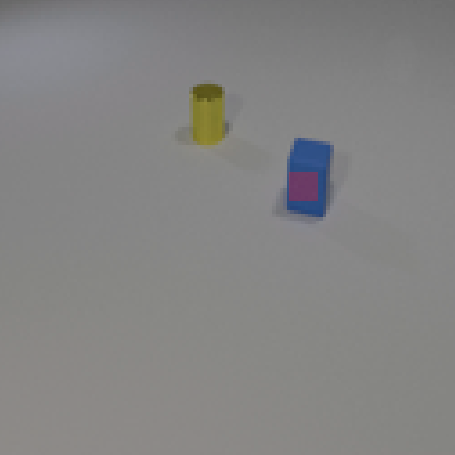

Here we demonstrate some generalization of CAGE to out-of-distribution (o.o.d.) controls. These include curved trajectories and sudden changes in velocity in CLEVRER, and moving background objects in BAIR. Notice that our models sometimes manage to map the o.o.d. controls to in-distribution ones (e.g., by introducing new objects in CLEVRER that collide with the controlled object; see the 2nd example in the first row). This shows the emergent planning capabilities of CAGE.

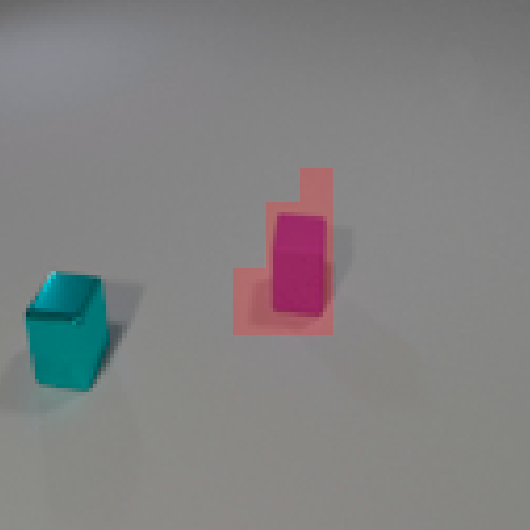

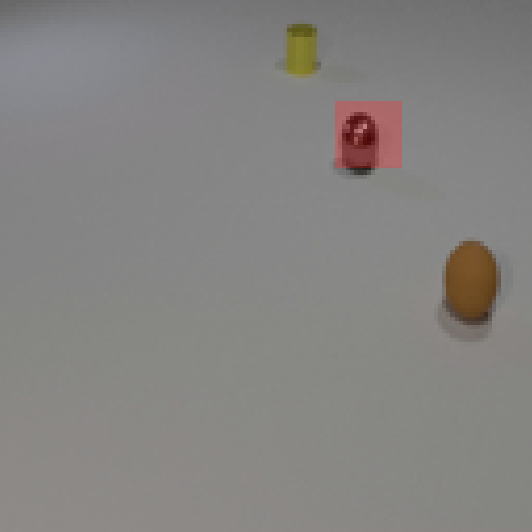

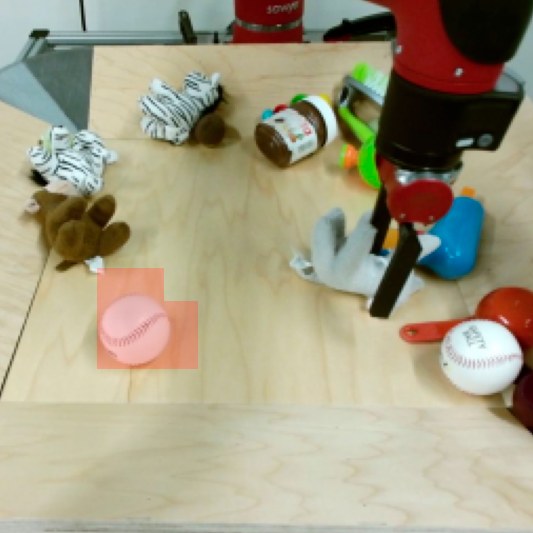

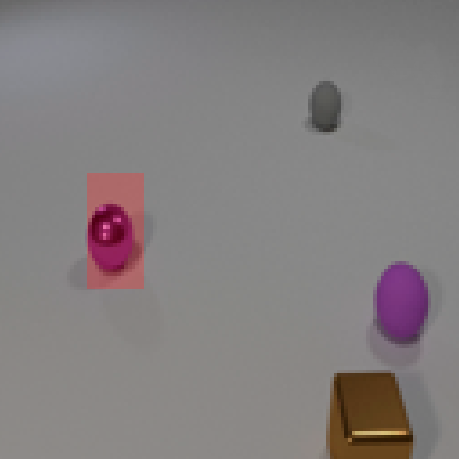

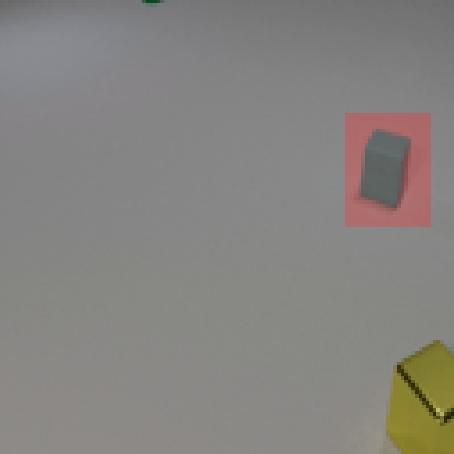

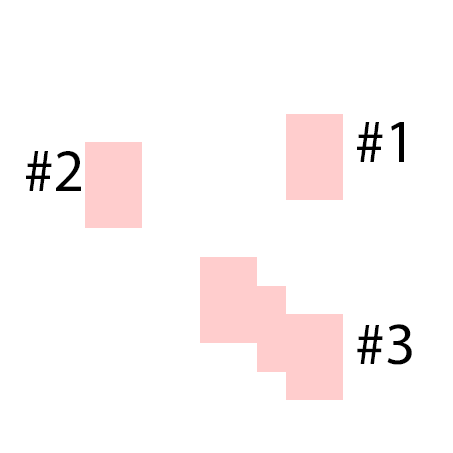

This section shows comparisons between CAGE (column 1 for the controls and column 2 for the generated videos) and the prior work YODA (column 3).

Although our controllability evaluation mostly shows short videos, CAGE's autoregressive nature allows it to generate longer videos at inference. Here are a few examples of 512-frame-long videos generated with CAGE.
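Autoregressive long-video generation can be sketched as a sliding-window rollout: each new chunk of frames is generated from the most recent frames and appended to the sequence. The chunk size, context length, and the `toy_generator` stand-in below are assumptions for illustration, not the settings used by CAGE.

```python
def rollout(initial_frames, generate_chunk, total_frames, context_len):
    """Extend a video by repeatedly generating a chunk from the latest frames."""
    frames = list(initial_frames)
    while len(frames) < total_frames:
        context = frames[-context_len:]      # only the most recent frames are seen
        frames.extend(generate_chunk(context))
    return frames[:total_frames]             # trim any overshoot from the last chunk

def toy_generator(context, chunk=4):
    """Hypothetical stand-in for the model: 'frames' are integers that keep counting up."""
    last = context[-1]
    return [last + i + 1 for i in range(chunk)]

video = rollout([0], toy_generator, total_frames=512, context_len=2)
```

The sequence length is bounded only by how many times the loop runs, not by the number of frames the generator sees at once.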

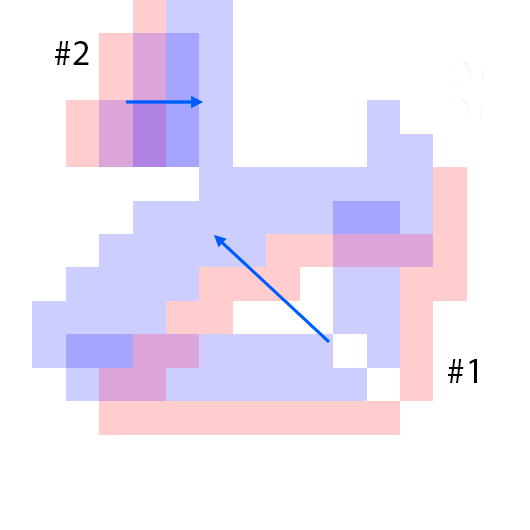

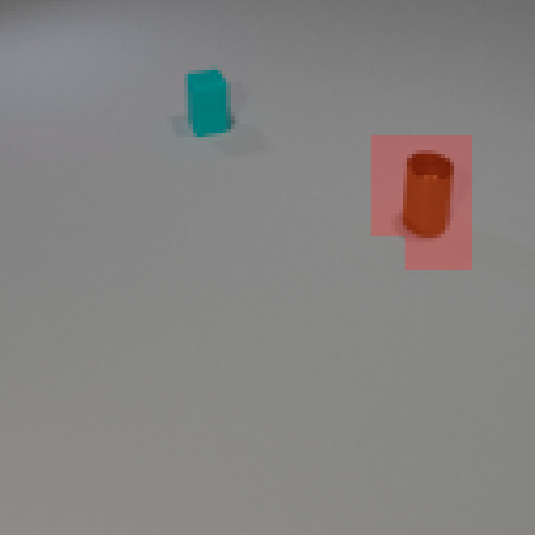

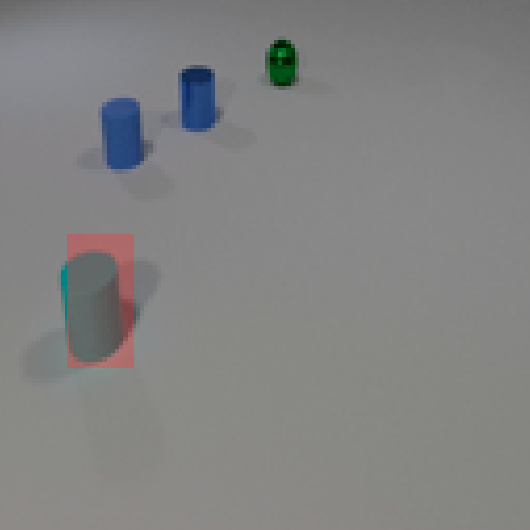

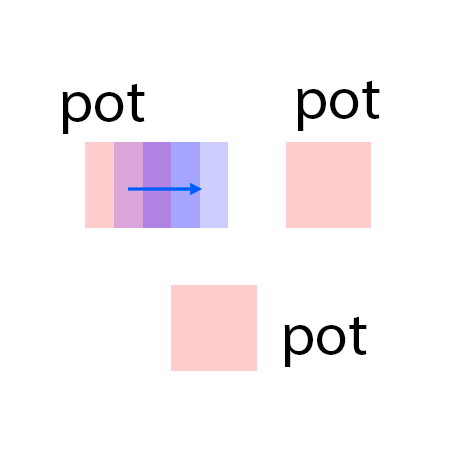

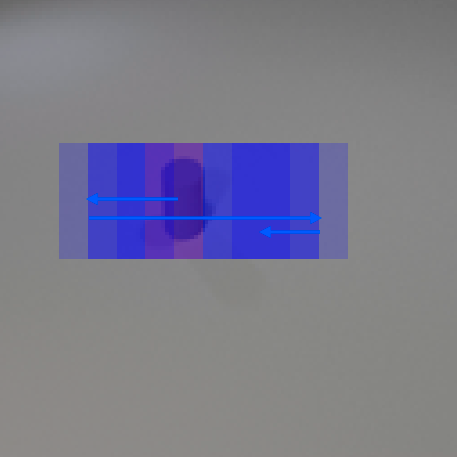

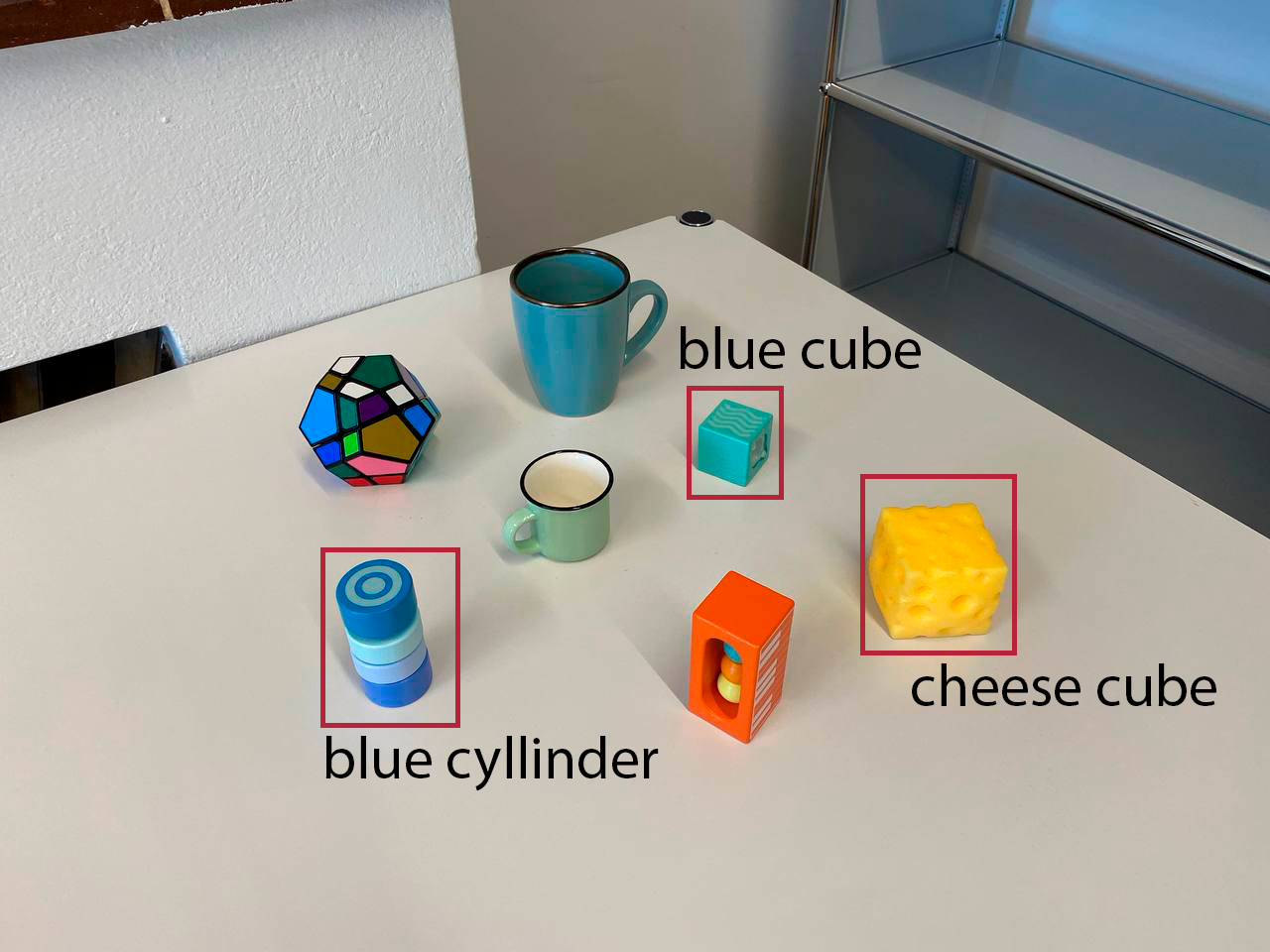

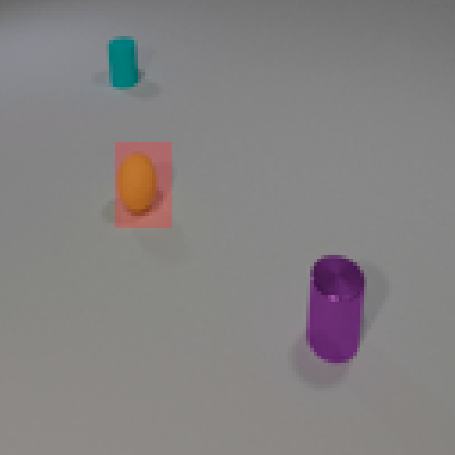

Here we show some generated sequences with only one vs. many DINOv2 tokens provided per object. While CAGE is fairly robust to the number of controls provided and can inpaint the missing information, sequences generated with more tokens are more consistent with the source image (e.g., in the second example on the second slide, the blue cube does not rotate when more tokens are provided, i.e., the pose of the object is fixed given the features).
